Infinidat Turns Digital Exhaust into Digital Fuel with Big Data Analytics

At INFINDAT we continue to see numerous big data projects operating on enterprise-class, hyperscale storage solutions like the InfiniBox, instead of “massively scalable” hyper-converged infrastructure (HCI) architectures. There is a very good reason for this, let’s explore that reason

here…

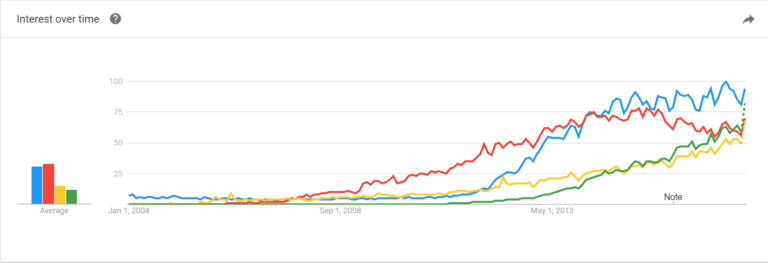

“Big data” is certainly a very popular term, which is gaining more and more interest over time as one can easily find with Google Trends:

Many people I talk to, because of the defined reference architecture for Hadoop or Splunk, associate big data with HCI and many small “white box” servers utilizing internal disks to store their ever growing amounts of data. Managing piles of cheap servers is not a simple task, and it does not work for everyone. Many other folks I talk to are using proprietary public cloud platforms like Amazon Web Service Elastic MapReduce (AWS EMR) or Elasticsearch Service (ES). But such solutions mean your data is controlled by someone else, and your monthly cost is substantial.

HCI graveyard, where white box servers/storage go to become spare parts… Photo courtesy of bbc.com

Are there better ways to store and process your valuable data? Of course there are!

Our customers are running numerous applications and workloads on their InfiniBox storage systems, including Big Data processing applications. We have customers connecting their Hadoop environments to InfiniBox. While the elephant deserves some attention, I will cover that in a future post.

In this post, I will discuss Splunk® Enterprise and Elastic – two somewhat similar analytic platforms used by our customers to process their machine-generated data. Wikipedia defines this term as “information automatically generated by a computer process, application, or another mechanism without the active intervention of a human.” The surge in machine-generated information translates to a lot of data, petabytes of data, which is a perfect match for INFINIDAT storage.

Both Splunk and Elastic offer scalable solutions for storing, processing and analyzing large amounts of machine-generated data. Let’s define some of the critical components using Splunk Enterprise as an example. A Splunk Enterprise instance that handles search management functions, directing search requests to a set of search peers and then merging the results back to the user is a search

head. It distributes search requests to other instances, called search peers, which perform the actual searching, as well as the data indexing.

Distributed search provides horizontal scaling so that a single Splunk Enterprise deployment can search and index arbitrarily large amounts of data. An indexer is a Splunk Enterprise instance that indexes data, transforming raw data into events and placing the results into an index. It also searches the indexed data in response to search requests. Indexer also frequently performs the other fundamental Splunk Enterprise functions.

Typical Splunk architecture with integrated storage

When one of our telco customers was planning their big data environments, they considered several options but decided to go with Elastic. They’ve started small, with just a two node cluster and eventually grew to an environment with over 20 indexers, processing billions of records daily.

Based on the initial performance requirements they considered SSD-based internal storage for the indexer nodes, but quickly found this configuration to be too rigid and prohibitively expensive. They decided to try running it on an InfiniBox. “It was running like a dream” the customer commented – thanks to the InfiniBox architecture with massive DRAM and SSD caching in front of spinning disks.

Decoupling compute from storage simplified scaling and upgrading the cluster. Deploying Elastic farms with local disks means every time a server has to be upgraded, the storage has to be upgraded also. It makes migration hard and expensive… very expensive.

When the storage is separated from servers, the servers can be replaced easily – as both OS and data are external. This is a very important point, especially when considering the rate at which data grows and CPUs improve. When data is protected by InfiniRAID™, users don’t need to think about rebuild times for individual cluster nodes, and a single cluster node can easily handle much higher

capacity. Managing hundreds or even thousands of internal drives would be a big operation on its own. With reliable external storage, the customer can use a single replica per shard, reducing the overall cost and complexity of the environment by a factor of three or more.

And the result?

“A query that took 40 minutes [before implementing the InfiniBox] completes within 15 seconds now.” That’s right, switching to shared storage for Elastic sped up their queries by a factor of 160x!

Customer’s Elastic environment handling billions of records per day

This customer’s story was shared at the recent Elasticon conference in San Francisco and is also a public reference at the Elastic web site.

Big data processing is important not only for Telcos. Another customer of ours – a large insurance company – planned to enhance global security logging, effective monitoring and response capability. They decided to deploy Splunk infrastructure.

This analytic system had to be capable of ingesting 100GB of data per day per indexer and have the capacity to hold that data in the hot/warm bucket for 60 days, totaling hundreds of TB per location. It had to be able to scale across several data centers around the world.

From the storage perspective, the customer had considered two options for the search heads and indexers:

- Blade servers with up to 12TB of INFINIDAT storage per server

- 2U servers with direct-attached SAS & SSD storage

Based on further analysis by the infrastructure team, the customer decided to proceed with the 1st option – using blade servers with INFINIDAT storage. This solution allows for a smaller footprint of hardware and the ability to expand the infrastructure as application capacity requirements change. External storage capacity is also easier to increase on the external array vs local disk capacity.

The INFINIDAT architecture simplifies platforms like Splunk and Elastic. The usual approach to control the cost of big data is to divide different buckets of storage for Hot, Warm and Cold data – dealing with capacity planning, fragmentation handling, and data migration policies between the buckets. With INFINIDAT, customers can eliminate the need to use strict buckets, or expand the size of each, increasing business value.

Today, this Splunk environment includes over 800TB of data across 2 data centers worldwide, and its’ usage is growing company-wide.

For these customers, as for many others, INFINIDAT storage is the key component of their production environment and helps them to extract critical business insights from their data. To help all our customers working with big data analytics, we’re partnering with the key vendors and communities like the Splunk Technology Alliance Program.

INFINIDAT storage is an exceptional choice for big data architectures and our customers have experienced great success with such workloads. Talk to us about the value we can provide for your big data infrastructures – the answer may surprise you!