Experience faster applications, zero downtime, built-in cyber resilience, and massive savings.

InfuzeOS™

Infinidat’s unique, software-defined storage architecture

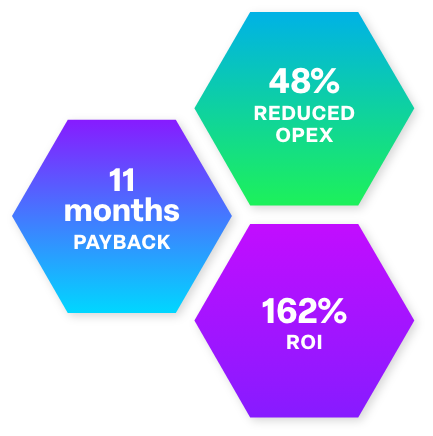

Save Millions with Infinidat

To quantify the cost savings of its enterprise storage platforms, Infinidat commissioned IDC to research the value and financial benefits of using the InfiniBox platform for enterprise storage. The project included interviews with current InfiniBox customers who have experienced the platform's benefits and reduced costs. Based on its analysis, IDC created a model that expresses the business value and costs of using the InfiniBox platform. The outcome of this business value analysis is compelling for any organization considering a storage purchase in these uncertain economic times.

Achieve unmatched performance, 100% availability, cyber resilience, and economics at petabyte scale

The InfiniBox primary storage platform sets the standard in enterprise storage for mixed application workloads.

- Guaranteed performance, 100% availability, and cyber recoverability

- Unparalleled customer experience

- Set-it-and-forget-it user experience

- InfiniSafe® cyber resilience features and capabilities

Enterprise storage with consistent ultra-high performance and microsecond-level latency for the best in real-world application deployment

InfiniBox SSA is Infinidat’s 100% solid-state storage platform, designed specifically for the most performance-sensitive, mission-critical workloads.

- Highest-performing storage platform available, with latencies as low as 35 microseconds for unmatched real-world application performance

- Comprehensive cyber resilience capabilities with InfiniSafe

- Guarantees for 100% availability, performance, and cyber resilience

Enterprise modern data protection with InfiniSafe® cyber protection technologies and unmatched performance

Ransomware, malware, and cyberattacks put your data at significant risk. Infinidat’s modern data protection and cyber resilience solution, InfiniGuard, plays an essential role in your overall cyber security strategy.

- Enterprise-class reliability and availability

- Extensive security features that ensure your data is protected at many levels

- Role-based access controls and multi-factor authentication

- Fastest possible recovery at the lowest TCO in the event of a cyberattack with InfiniSafe